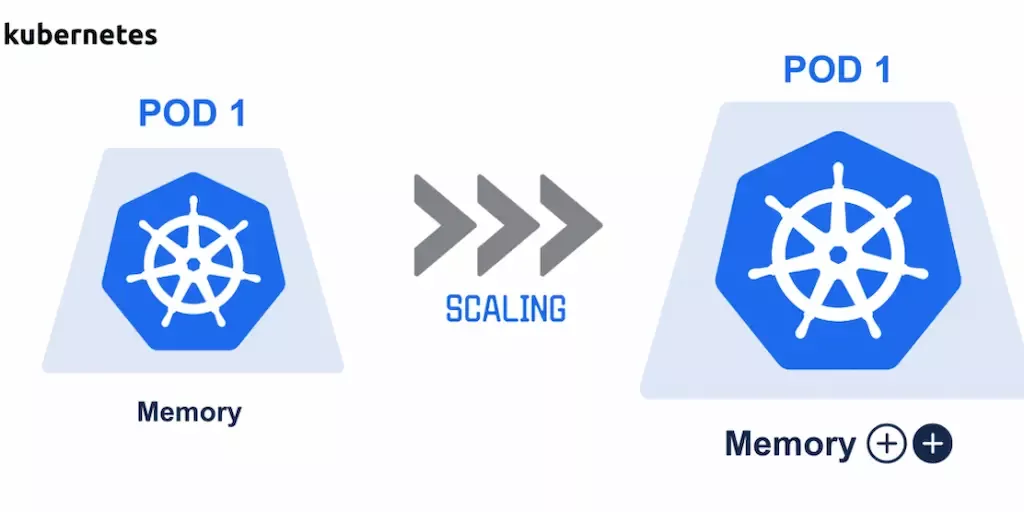

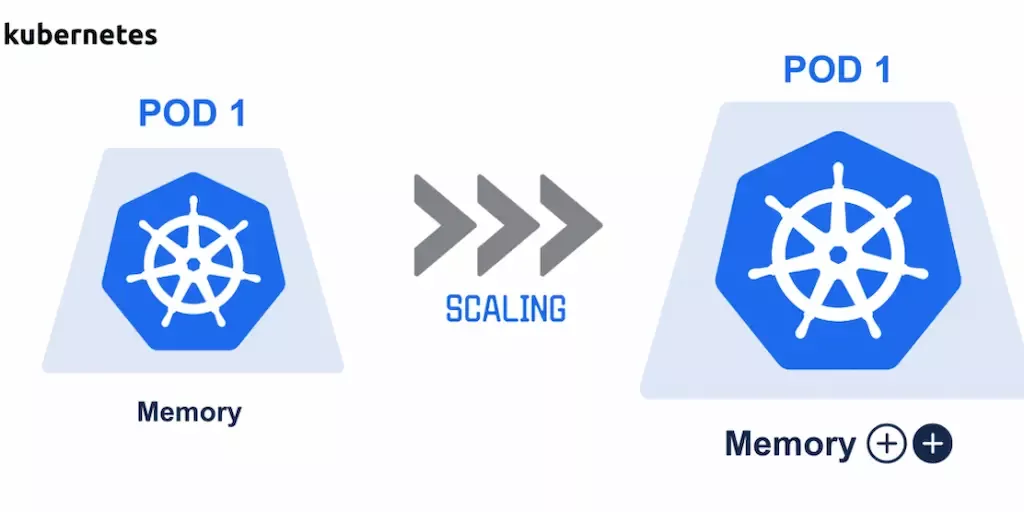

Kubernetes (K8s) Autoscaler: a detailed look at the design and implementation of VPA

The Vertical Pod Autoscaler (VPA) is an important part of cluster resource control in Kubernetes (K8s). Its main purposes include:

- Updating the resources required by Pods (such as CPU and memory) automatically to lower the cost of cluster maintenance;

- Enhancing the utilization of cluster resources and reducing the risk of containers experiencing Out Of Memory (OOM) or CPU starvation in the cluster.

This article analyzes the design and implementation principles of the VPA in Autoscaler. The source code discussed here is based on Autoscaler HEAD fbe25e1.

The overall architecture of Autoscaler VPA

Autoscaler VPA automatically adjusts a Pod's resource requirements based on the Pod's actual resource usage. It implements VPA by defining the VerticalPodAutoscaler CRD. In simple terms, the CRD defines which Pods (selected by a label selector) are managed, which update policy is used to update them, and which resource policy governs how the new resource values are computed.

The VPA of Autoscaler is implemented by the following components working together.

- Recommender: Responsible for calculating the recommended resource values for all Pods managed under each VPA object.

- Admission Controller: Responsible for intercepting all Pod creation requests and rewriting the Pod's resource fields according to the VPA object that controls it.

- Updater: Responsible for the real-time update of Pod resources.

Recommender

The VPA Recommender of Autoscaler is deployed as a Deployment in Kubernetes. In the spec of the VerticalPodAutoscaler CRD, one or more VPA Recommenders can be specified through the Recommenders field (by default, the VPA Recommender named default is used).

The core structure of the VPA Recommender includes:

- ClusterState represents the state of all objects in the cluster, mainly the state of Pods and VPA objects, and acts as a local cache;

- ClusterStateFeeder defines a series of methods for acquiring the state of cluster objects or resources; the acquired state is ultimately stored in ClusterState.

The VPA Recommender periodically calculates the recommended resource values, at an interval specified by the --recommender-interval parameter (default is 1 min). On each run, the VPA Recommender first loads all VPA objects, Pod resources, and real-time metrics data into ClusterState through ClusterStateFeeder. Finally, it writes the calculated Pod resource recommendations into the VPA object.

The recommended Pod resource values are calculated by the Estimator defined by Autoscaler VPA. The calculation centers on the PercentileEstimator, which builds a distribution from the historical resource usage of each container within a Pod. It then takes the resource value at a certain percentile of this distribution (for example, the 95th percentile) as the final resource recommendation.
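To make the percentile idea concrete, here is a minimal sketch in Go. It is not the PercentileEstimator itself: it simply sorts raw samples and applies the nearest-rank method, and the `percentile` function and the sample values are hypothetical names and data chosen for illustration.

```go
package main

import (
	"fmt"
	"math"
	"sort"
)

// percentile returns the nearest-rank p-th percentile (0 < p <= 1) of samples.
// Assumption: this sorts raw samples for clarity; it is a simplified stand-in,
// not the estimator used by the VPA Recommender.
func percentile(samples []float64, p float64) float64 {
	if len(samples) == 0 {
		return 0
	}
	sorted := append([]float64(nil), samples...) // copy so the caller's slice is untouched
	sort.Float64s(sorted)
	rank := int(math.Ceil(p * float64(len(sorted)))) // 1-based nearest rank
	if rank < 1 {
		rank = 1
	}
	if rank > len(sorted) {
		rank = len(sorted)
	}
	return sorted[rank-1]
}

func main() {
	// Hypothetical CPU usage samples for one container, in cores.
	cpuSamples := []float64{0.10, 0.12, 0.11, 0.30, 0.15, 0.14, 0.13, 0.90, 0.16, 0.12}
	fmt.Printf("p50: %.2f cores, p95: %.2f cores\n",
		percentile(cpuSamples, 0.50), percentile(cpuSamples, 0.95))
}
```

A high percentile deliberately tracks the usage peaks rather than the average, which is why a single spike in the samples can dominate the recommendation in this simplified version.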

Admission Controller

The VPA Admission Controller of Autoscaler is deployed as a Deployment and, by default, serves HTTPS through a Service named vpa-webhook in the kube-system namespace.

The VPA Admission Controller is mainly responsible for creating and starting the Admission Server, with the overall execution process as follows:

- Register Handlers for Pod and VPA objects, responsible for handling requests for each object;

- Register a Calculator to obtain the resource recommendation values calculated in the Recommender;

- Register a Mutating Admission Webhook to intercept the creation requests of Pod objects and the creation and update requests of VPA objects.

For intercepted requests, the Admission Server calls the relevant Handler, which uses JSON Patch to write the resource recommendations calculated by the Autoscaler VPA Recommender into the fields of the original object.

For example, the GetPatches method corresponding to the Handler of the Pod object is as follows:

```go
// vertical-pod-autoscaler/pkg/admission-controller/resource/pod/handler.go
func (h *resourceHandler) GetPatches(ar *admissionv1.AdmissionRequest) ([]resource_admission.PatchRecord, error) {
	raw, namespace := ar.Object.Raw, ar.Namespace
	pod := v1.Pod{}
	err := json.Unmarshal(raw, &pod)
	// ...
	controllingVpa := h.vpaMatcher.GetMatchingVPA(&pod) // Obtain the VPA resource that controls the specified Pod
	patches := []resource_admission.PatchRecord{}
	for _, c := range h.patchCalculators {
		partialPatches, err := c.CalculatePatches(&pod, controllingVpa) // Return a patch based on the calculation method of each calculator
		patches = append(patches, partialPatches...)
	}
	return patches, nil
}
```
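The patches returned by such a Handler are JSON Patch operations that the API server applies to the Pod before it is persisted. The sketch below shows roughly what an operation that sets a container's resource request could look like when serialized. The `patchOp` type and the `requestPatch` and `marshalPatches` helpers are hypothetical and only mirror the general JSON Patch shape; they are not the resource_admission.PatchRecord type or any VPA API.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// patchOp is a hypothetical stand-in for one JSON Patch (RFC 6902) operation.
type patchOp struct {
	Op    string `json:"op"`
	Path  string `json:"path"`
	Value string `json:"value"`
}

// requestPatch builds an operation that sets one resource request on the
// container at containerIndex. Assumption: the "requests" object already
// exists on the container, so "add" sets or replaces a single member.
func requestPatch(containerIndex int, resource, quantity string) patchOp {
	return patchOp{
		Op:    "add",
		Path:  fmt.Sprintf("/spec/containers/%d/resources/requests/%s", containerIndex, resource),
		Value: quantity,
	}
}

// marshalPatches serializes the operations into a JSON Patch document.
func marshalPatches(ops []patchOp) (string, error) {
	body, err := json.Marshal(ops)
	if err != nil {
		return "", err
	}
	return string(body), nil
}

func main() {
	// Hypothetical recommended values for the first container of a Pod.
	body, err := marshalPatches([]patchOp{
		requestPatch(0, "cpu", "250m"),
		requestPatch(0, "memory", "256Mi"),
	})
	if err != nil {
		panic(err)
	}
	fmt.Println(body)
}
```

Because a patch only describes the fields to change, each calculator can contribute its own operations independently and the Handler can simply concatenate them, as the loop in GetPatches does.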

Updater

The VPA Updater of Autoscaler is deployed as a Deployment. It determines which Pods need to be adjusted according to the resource recommendations calculated by the VPA Recommender. The VPA Updater adjusts Pod resources by evicting and recreating Pods, while also taking the Pod Disruption Budget into account. The VPA Updater itself cannot update resources. It is only responsible for evicting Pods; when a Pod is created again, the VPA Admission Controller updates its resource values.

Each round of resource updates invokes the RunOnce method of the VPA Updater. This method enumerates each VPA resource and its corresponding Pods, selects the Pods in the current VPA that need resource updates, and evicts them one by one.

```go
// vertical-pod-autoscaler/pkg/updater/logic/updater.go
func (u *updater) RunOnce(ctx context.Context) {
	// ...
	vpaList, err := u.vpaLister.List(labels.Everything()) // List all VPA resources
	vpas := make([]*vpa_api_util.VpaWithSelector, 0)
	for _, vpa := range vpaList {
		selector, err := u.selectorFetcher.Fetch(vpa)
		vpas = append(vpas, &vpa_api_util.VpaWithSelector{
			Vpa:      vpa,
			Selector: selector,
		})
	}

	podsList, err := u.podLister.List(labels.Everything()) // List all Pod resources
	allLivePods := filterDeletedPods(podsList)             // Filter out deleted Pods (DeletionTimestamp is set)
	controlledPods := make(map[*vpa_types.VerticalPodAutoscaler][]*apiv1.Pod)
	for _, pod := range allLivePods {
		controllingVPA := vpa_api_util.GetControllingVPAForPod(pod, vpas) // Find the VPA resource that controls the current Pod
		if controllingVPA != nil {
			controlledPods[controllingVPA.Vpa] = append(controlledPods[controllingVPA.Vpa], pod)
		}
	}

	for vpa, livePods := range controlledPods {
		evictionLimiter := u.evictionFactory.NewPodsEvictionRestriction(livePods, vpa)
		podsForUpdate := u.getPodsUpdateOrder(filterNonEvictablePods(livePods, evictionLimiter), vpa) // Identify Pods requiring resource updates for eviction
		for _, pod := range podsForUpdate {
			if !evictionLimiter.CanEvict(pod) { // Determine if eviction is feasible
				continue
			}
			evictErr := evictionLimiter.Evict(pod, u.eventRecorder) // Conduct the eviction
		}
	}
}
```
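The grouping step in the middle of RunOnce (skip deleted Pods, then attach each live Pod to the VPA that controls it) can be sketched on its own. The types and the `groupByVPA` function below are hypothetical simplifications: selectors are plain equality maps, and "first matching VPA wins" is an assumption made for brevity, not the rule implemented by GetControllingVPAForPod.

```go
package main

import "fmt"

// pod and vpa are hypothetical, heavily simplified stand-ins for the API types.
type pod struct {
	Name     string
	Labels   map[string]string
	Deleting bool // stands in for a non-empty DeletionTimestamp
}

type vpa struct {
	Name     string
	Selector map[string]string // equality-based selector only
}

// matches reports whether every key/value pair of selector is present in labels.
func matches(selector, labels map[string]string) bool {
	for k, want := range selector {
		if got, ok := labels[k]; !ok || got != want {
			return false
		}
	}
	return true
}

// groupByVPA maps each VPA name to the names of the live Pods it controls.
// Assumption: the first matching VPA wins.
func groupByVPA(pods []pod, vpas []vpa) map[string][]string {
	controlled := make(map[string][]string)
	for _, p := range pods {
		if p.Deleting {
			continue // Pods that are already being deleted are ignored
		}
		for _, v := range vpas {
			if matches(v.Selector, p.Labels) {
				controlled[v.Name] = append(controlled[v.Name], p.Name)
				break
			}
		}
	}
	return controlled
}

func main() {
	pods := []pod{
		{Name: "web-1", Labels: map[string]string{"app": "web"}},
		{Name: "web-2", Labels: map[string]string{"app": "web"}, Deleting: true},
		{Name: "db-1", Labels: map[string]string{"app": "db"}},
	}
	vpas := []vpa{{Name: "web-vpa", Selector: map[string]string{"app": "web"}}}
	fmt.Println(groupByVPA(pods, vpas))
}
```

Pods that match no VPA, such as db-1 here, never appear in the result, so the eviction loop that follows only ever sees Pods under VPA control.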

Step 1: Update Priority

In the final step of the above-mentioned process, the VPA Updater returns a list of Pods needing resource updates through the getPodsUpdateOrder method. The Pods in this list are sorted in order of update priority, from highest to lowest.

The update priority of Pods is calculated using the GetUpdatePriority method. The return type, PodPriority, includes a ResourceDiff field, which represents the normalized sum of the differences across all resource types (the absolute difference between the requested and recommended values, divided by the requested value). ResourceDiff serves as the ordering criterion when sorting Pods by update priority.

```go
// vertical-pod-autoscaler/pkg/updater/priority/priority_processor.go
func (*defaultPriorityProcessor) GetUpdatePriority(pod *apiv1.Pod, _ *vpa_types.VerticalPodAutoscaler, recommendation *vpa_types.RecommendedPodResources) PodPriority {
	// ...
	totalRequestPerResource := make(map[apiv1.ResourceName]int64)     // Total requested value per resource type
	totalRecommendedPerResource := make(map[apiv1.ResourceName]int64) // Total recommended value per resource type
	for _, podContainer := range pod.Spec.Containers {
		recommendedRequest := vpa_api_util.GetRecommendationForContainer(podContainer.Name, recommendation) // Get the recommendation for this container
		for resourceName, recommended := range recommendedRequest.Target {
			totalRecommendedPerResource[resourceName] += recommended.MilliValue()
			lowerBound, hasLowerBound := recommendedRequest.LowerBound[resourceName]
			upperBound, hasUpperBound := recommendedRequest.UpperBound[resourceName]
			// Assessment of various edge cases
		}
	}

	resourceDiff := 0.0 // The sum of the differences across all resource types
	for resource, totalRecommended := range totalRecommendedPerResource {
		totalRequest := math.Max(float64(totalRequestPerResource[resource]), 1.0)
		resourceDiff += math.Abs(totalRequest-float64(totalRecommended)) / totalRequest // Normalize the difference for each resource type
	}
	return PodPriority{
		ResourceDiff: resourceDiff,
		// ...
	}
}
```
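A small worked example may help with the normalization. The sketch below reproduces only the final loop of the excerpt on plain maps and then sorts Pods from the highest to the lowest difference. The `resourceDiff` and `sortByDiff` functions, the `podDiff` type, and all numbers are hypothetical, and sorting on ResourceDiff alone is a simplification, since PodPriority carries further fields that the excerpt elides.

```go
package main

import (
	"fmt"
	"math"
	"sort"
)

// resourceDiff sums |request - recommended| / request over all recommended
// resources. A missing or zero request is treated as 1 to avoid dividing by zero.
func resourceDiff(requested, recommended map[string]int64) float64 {
	diff := 0.0
	for name, rec := range recommended {
		req := math.Max(float64(requested[name]), 1.0)
		diff += math.Abs(req-float64(rec)) / req
	}
	return diff
}

// podDiff pairs a Pod name with its computed difference (hypothetical type).
type podDiff struct {
	Name string
	Diff float64
}

// sortByDiff orders Pods so that the largest difference comes first.
func sortByDiff(pods []podDiff) {
	sort.SliceStable(pods, func(i, j int) bool { return pods[i].Diff > pods[j].Diff })
}

func main() {
	// cpu: |100-150|/100 = 0.5, memory: |200-100|/200 = 0.5, total 1.0
	a := resourceDiff(
		map[string]int64{"cpu": 100, "memory": 200},
		map[string]int64{"cpu": 150, "memory": 100},
	)
	// cpu: |100-110|/100 = 0.1, memory unchanged, total 0.1
	b := resourceDiff(
		map[string]int64{"cpu": 100, "memory": 200},
		map[string]int64{"cpu": 110, "memory": 200},
	)
	pods := []podDiff{{"pod-b", b}, {"pod-a", a}}
	sortByDiff(pods)
	for _, p := range pods {
		fmt.Printf("%s diff=%.2f\n", p.Name, p.Diff)
	}
}
```

Dividing by the request makes CPU and memory comparable despite their different units, so a Pod whose request is off by half ranks ahead of one that is off by a tenth, regardless of the absolute amounts.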

Step 2: Eviction Event

For every Pod that needs a resource update, the VPA Updater first checks whether it can be evicted. Pods that can be evicted are evicted; those that cannot are skipped for the current round.

Whether a Pod can be evicted by the VPA Updater is determined by the CanEvict method. For the controller that owns the Pod, this method ensures that only a tolerable number of replicas are evicted, and that this number is never zero (at least one replica can be evicted).

```go
// vertical-pod-autoscaler/pkg/updater/eviction/pods_eviction_restriction.go
func (e *podsEvictionRestrictionImpl) CanEvict(pod *apiv1.Pod) bool {
	cr, present := e.podToReplicaCreatorMap[getPodID(pod)] // Locate the controller based on the Pod ID
	if present {
		singleGroupStats, present := e.creatorToSingleGroupStatsMap[cr]
		if pod.Status.Phase == apiv1.PodPending {
			return true // Pods in a Pending state are eligible for eviction
		}
		if present {
			// evictionTolerance is derived from evictionToleranceFraction and is the maximum number
			// of replicas that may be evicted; shouldBeAlive is the number that must stay alive.
			shouldBeAlive := singleGroupStats.configured - singleGroupStats.evictionTolerance
			if singleGroupStats.running-singleGroupStats.evicted > shouldBeAlive {
				return true // Pods within the tolerable limit for eviction are eligible to be evicted
			}
			if singleGroupStats.running == singleGroupStats.configured &&
				singleGroupStats.evictionTolerance == 0 &&
				singleGroupStats.evicted == 0 {
				return true // If all Pods are running and the tolerance rounds down to zero, exactly one Pod can be evicted
			}
		}
	}
	return false
}
```
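The two conditions in CanEvict are easier to follow with numbers. The sketch below restates them on a plain struct and derives the tolerance from a fraction. The `groupStats` type and the `tolerance` and `canEvict` functions are hypothetical simplifications. Truncating the product toward zero and the fraction 0.5 are assumptions made for illustration, and the Pending shortcut from the excerpt is left out.

```go
package main

import "fmt"

// groupStats is a hypothetical mirror of the per-controller counters.
type groupStats struct {
	configured        int // replicas the controller is configured to run
	running           int // replicas currently running
	evicted           int // replicas already evicted in this round
	evictionTolerance int // maximum replicas that may be evicted
}

// tolerance derives the eviction tolerance from a fraction of the configured
// replicas. Assumption: the product is truncated toward zero.
func tolerance(configured int, fraction float64) int {
	return int(float64(configured) * fraction)
}

// canEvict restates the two conditions from the excerpt above.
func canEvict(s groupStats) bool {
	shouldBeAlive := s.configured - s.evictionTolerance
	if s.running-s.evicted > shouldBeAlive {
		return true // still above the number of replicas that must stay alive
	}
	// With a tolerance of zero, allow exactly one eviction if everything is healthy.
	return s.running == s.configured && s.evictionTolerance == 0 && s.evicted == 0
}

func main() {
	const fraction = 0.5 // hypothetical tolerance fraction

	large := groupStats{configured: 10, running: 10, evictionTolerance: tolerance(10, fraction)}
	fmt.Println("10 replicas, none evicted:", canEvict(large))
	large.evicted = 5
	fmt.Println("10 replicas, 5 evicted:", canEvict(large))

	single := groupStats{configured: 1, running: 1, evictionTolerance: tolerance(1, fraction)}
	fmt.Println("1 replica, none evicted:", canEvict(single))
	single.evicted = 1
	fmt.Println("1 replica, 1 evicted:", canEvict(single))
}
```

The second condition is what keeps small workloads updatable: with a single replica the tolerance truncates to zero, so without it the first condition alone would never allow an eviction.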

The Evict function is responsible for evicting a Pod, which means sending an eviction request for the target Pod.

Summary

Autoscaler is a library of cluster autoscaling tools maintained by the Kubernetes community, and VPA (Vertical Pod Autoscaler) is just one of its modules. The VPA implementations in many public clouds, such as Google Kubernetes Engine (GKE), are similar to Autoscaler's VPA implementation. However, compared to Autoscaler, GKE adds the following improvements:

- Additional consideration of the maximum number of nodes and single node resource limits when calculating resource recommendation values.

- The ability of VPA to inform the Cluster Autoscaler to adjust cluster capacity.

- Treating VPA as a control plane process, rather than as Deployments on worker nodes.

As described above, the VPA in Autoscaler is fundamentally based on evicting and recreating Pods. In scenarios that are sensitive to eviction, Autoscaler's VPA may therefore not be a good fit. In such cases, a technology capable of updating Pod resources in place is required.