How To Build a Platform on Kubernetes

When you’re building an internal platform for engineers, developers, and anyone else in your organization who needs it, the possibilities are endless. You can deploy the platform on VMs, on bare metal, on container services like AWS ECS, and on everything in between.

The goal is to make engineers’ lives as efficient as possible with your platform, but there are multiple ways to do that.

In this blog post, you’ll learn how to build a platform in one place, Kubernetes, instead of stitching together multiple systems.

Why Kubernetes Is The Best Platform For Platform Engineering

As you build a platform for the engineers and developers who will use it, you’ll have a few different options. Whichever you choose, the platform requires several pieces. To list a few:

- An API

- A CLI

- A method of deploying resources

- A method to deploy clusters

- Management and deployment of containers

- IaC tools like Terraform

- Deploying and managing VMs

Although that seems like a lot, it’s the common “stack” in many environments today.

With that list alone, you’re looking at roughly six to eight different tools, plus an entirely separate platform just to run VMs if you also have containers to deploy: multiple tools, a full virtualized environment (ESXi, Hyper-V, etc.), and a container solution.

With Kubernetes, you can manage all of these in one place.

Operators

First, there are Operators. An Operator consists of Kubernetes Custom Resource Definitions (CRDs) and Kubernetes Controllers.

CRDs are how the Kubernetes API is extended. Kubernetes is designed so that its API can be extended, and the primary reason to do so is when Kubernetes doesn’t already have what you need. If you’ve ever wondered how so many vendor and open-source tools can be used within a Kubernetes Manifest, this is how. Typically, the extension is written in Go (golang) with a tool called Kubebuilder. Tooling exists for other languages, but Go is the primary one.

For example, you can create a new type struct containing the fields you want available in your API.

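```go
// MikesAPISpec defines the desired state of a MikesAPI object.
type MikesAPISpec struct {
	// Exposed as spec.mikesPhoneNumber in manifests.
	MikesPhoneNumber string `json:"mikesPhoneNumber,omitempty"`

	// Exposed as spec.mikesAge in manifests.
	MikesAge int `json:"mikesAge,omitempty"`
}
```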

You can then use those fields within a Kubernetes Manifest.

```yaml
apiVersion: simplyanengineer.com/v1
kind: MikesAPI
metadata:
  name: mikesapi-sample
spec:
  mikesPhoneNumber: # a string
  mikesAge: # an integer
```
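The json struct tags are what connect the Go type to the manifest: each tag names the key that appears under spec, and omitempty drops zero values when the object is serialized (YAML manifests are converted to JSON on their way to the API server). The stdlib-only sketch below round-trips a spec through encoding/json to make that mapping visible. The decodeSpec and encodeSpec helpers and the sample phone number and age are hypothetical, for illustration only.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// MikesAPISpec mirrors the spec type above; the json tags set the manifest keys.
type MikesAPISpec struct {
	MikesPhoneNumber string `json:"mikesPhoneNumber,omitempty"`
	MikesAge         int    `json:"mikesAge,omitempty"`
}

// decodeSpec parses the JSON form of a manifest's spec section into the Go type.
func decodeSpec(raw string) (MikesAPISpec, error) {
	var s MikesAPISpec
	err := json.Unmarshal([]byte(raw), &s)
	return s, err
}

// encodeSpec serializes the spec; omitempty leaves out zero-valued fields.
func encodeSpec(s MikesAPISpec) (string, error) {
	b, err := json.Marshal(s)
	return string(b), err
}

func main() {
	// Hypothetical sample values.
	spec, err := decodeSpec(`{"mikesPhoneNumber": "555-0100", "mikesAge": 40}`)
	if err != nil {
		panic(err)
	}
	fmt.Printf("%+v\n", spec)

	// MikesAge is 0 here, so omitempty drops the mikesAge key from the output.
	out, err := encodeSpec(MikesAPISpec{MikesPhoneNumber: "555-0100"})
	if err != nil {
		panic(err)
	}
	fmt.Println(out)
}
```

Without the comma in the tag, Go would treat the whole string as the key name, so the manifest field would no longer match.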

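Next, you create a Controller (also scaffolded with Kubebuilder) that runs the reconciliation loop, which is how self-healing occurs: the Controller repeatedly compares the desired state declared in your manifests with the actual state of the cluster and acts to close any gap.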

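```go
import (
	"k8s.io/apimachinery/pkg/runtime"
	"sigs.k8s.io/controller-runtime/pkg/client"
)

// MikesAPIReconciler reconciles MikesAPI objects.
type MikesAPIReconciler struct {
	client.Client                 // reads and writes objects through the Kubernetes API
	Scheme        *runtime.Scheme // maps Go types to their API group, version, and kind
}
```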
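Conceptually, reconciliation means comparing the desired state with the actual state and acting on the difference, over and over, until nothing is left to do. The stdlib-only sketch below models that idea; State, reconcile, and the replica counts are hypothetical stand-ins. In a real Kubebuilder project this logic lives in the scaffolded Reconcile method, which controller-runtime calls when a watched object changes, and the actions would be Kubernetes API calls rather than strings.

```go
package main

import "fmt"

// State is a hypothetical, simplified stand-in for what a controller manages,
// such as the number of running replicas of some workload.
type State struct {
	Replicas int
}

// reconcile compares desired state (from the manifest's spec) with the actual
// state and returns the actions needed to close the gap.
func reconcile(desired, actual State) []string {
	var actions []string
	// Too few replicas: create the missing ones.
	for i := actual.Replicas; i < desired.Replicas; i++ {
		actions = append(actions, fmt.Sprintf("create replica %d", i))
	}
	// Too many replicas: delete the extras, highest index first.
	for i := actual.Replicas - 1; i >= desired.Replicas; i-- {
		actions = append(actions, fmt.Sprintf("delete replica %d", i))
	}
	return actions
}

func main() {
	desired := State{Replicas: 3}
	// Simulate drift: two replicas were removed outside the controller.
	actual := State{Replicas: 1}

	for _, action := range reconcile(desired, actual) {
		fmt.Println(action)
	}

	// After the actions are applied, reconciling again finds nothing to do.
	actual = desired
	fmt.Println("pending actions:", len(reconcile(desired, actual)))
}
```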

With Operators, you can use Kubernetes to manage almost anything programmatically, as long as an API exists for it. If one doesn’t, you can create it.

Cluster API

The next piece of the Platform Engineering puzzle on Kubernetes is Cluster API, which lets you create and manage Kubernetes clusters with Kubernetes. You create Cluster API Providers the same way you’d create an Operator, with Kubebuilder.

You can see a full list of Cluster API Providers here: https://cluster-api.sigs.k8s.io/reference/providers.html

Although you can build Cluster API Providers, unless you work for an infrastructure or system vendor (Microsoft, Google, AWS, DigitalOcean, etc.), chances are you’ll use existing Providers rather than build them. That’s where the Provider list above comes into play.

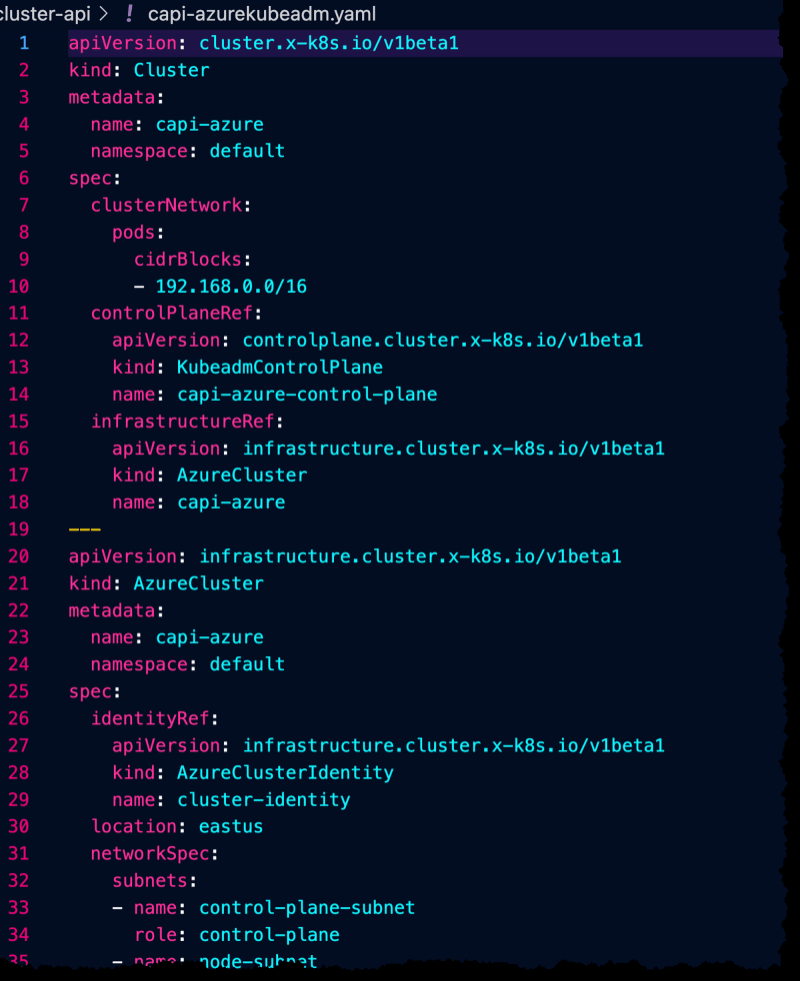

There are several setup steps needed first, such as installing the Provider on your management cluster (for example, with clusterctl init --infrastructure azure) and giving Kubernetes a way to authenticate to the Provider’s platform (Azure, AWS, etc.). Once that’s done, here’s an example of creating a Kubernetes cluster on Azure with VMs bootstrapped using kubeadm.

First, set environment variables for the region, the VM sizes of the control plane and worker nodes, and the resource group.

```shell
export AZURE_LOCATION="eastus"
export AZURE_CONTROL_PLANE_MACHINE_TYPE="Standard_D2s_v3"
export AZURE_NODE_MACHINE_TYPE="Standard_D2s_v3"
export AZURE_RESOURCE_GROUP="devrelasaservice"
```
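clusterctl fills in ${VARIABLE} placeholders in the Provider’s cluster template from variables like the ones above, and clusterctl generate cluster --list-variables shows which ones a template expects. The sketch below approximates that substitution with Go’s os.Expand so you can see which variables a template references and whether they are set. The two-line template is a hypothetical, heavily trimmed fragment rather than the real Azure template, and clusterctl’s own substitution supports more syntax than os.Expand does.

```go
package main

import (
	"fmt"
	"os"
)

// clusterTemplate is a hypothetical fragment written in the style of a
// Cluster API template; the real Azure template is much larger.
const clusterTemplate = `location: ${AZURE_LOCATION}
vmSize: ${AZURE_CONTROL_PLANE_MACHINE_TYPE}`

// render replaces ${VAR} placeholders using lookup and returns the names of
// any variables that were referenced but not set.
func render(tmpl string, lookup func(string) (string, bool)) (string, []string) {
	var missing []string
	out := os.Expand(tmpl, func(name string) string {
		value, ok := lookup(name)
		if !ok {
			missing = append(missing, name)
		}
		return value
	})
	return out, missing
}

func main() {
	// os.LookupEnv reads the variables exported in your shell.
	out, missing := render(clusterTemplate, os.LookupEnv)
	if len(missing) > 0 {
		fmt.Println("unset variables:", missing)
		return
	}
	fmt.Println(out)
}
```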

Next, you’d generate the cluster configuration.

```shell
clusterctl generate cluster capi-azure --kubernetes-version v1.27.0 > capi-azurekubeadm.yaml
```

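The generated capi-azurekubeadm.yaml file contains a large Kubernetes Manifest describing the cluster, including objects such as Cluster, AzureCluster, KubeadmControlPlane, and MachineDeployment. Applying it to your management cluster with kubectl apply -f capi-azurekubeadm.yaml creates the cluster.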

KubeVirt

The next piece of the puzzle is Virtual Machines (VMs). Despite what we may say, VMs are still very much in use in the majority of environments, and virtualization software like Hyper-V and ESXi still runs throughout datacenters. Unless an organization is a startup or started its journey in the cloud, it has VMs somewhere.

Luckily, Kubernetes gives you a way to manage VMs as well, through KubeVirt.

The method feels a little odd at first, but it works beautifully.

To test this out, first download a Windows Server 2019 ISO (a free evaluation version is available): https://info.microsoft.com/ww-landing-windows-server-2019.html

Next, use a Dockerfile to build a Docker image that contains the ISO. KubeVirt can attach an image like this to a VM as a containerDisk, which expects the disk file under the /disk/ directory.

```dockerfile
FROM scratch
ADD 17763.3650.221105-1748.rs5_release_svc_refresh_SERVER_EVAL_x64FRE_en-us.iso /disk/
```

Build the Docker image and push it to Docker Hub. In the example below, replace adminturneddevops with your own Docker Hub username.

```shell
docker build -t adminturneddevops/winkube:latest .
docker push adminturneddevops/winkube:latest
```

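The last step is to create a VM object from the KubeVirt API. The manifest below assumes KubeVirt is already installed on your cluster and that a PersistentVolumeClaim named winhd exists to back the VM’s hard drive.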

```yaml
apiVersion: kubevirt.io/v1
kind: VirtualMachine
metadata:
  generation: 1
  labels:
    kubevirt.io/os: windows
  name: windowsonkubernetes
spec:
  running: true
  template:
    metadata:
      labels:
        kubevirt.io/domain: vm1
    spec:
      domain:
        cpu:
          cores: 2
        devices:
          disks:
          - cdrom:
              bus: sata
            bootOrder: 1
            name: iso
          - disk:
              bus: virtio
            name: harddrive
          - cdrom:
              bus: sata
              readonly: true
            name: virtio-drivers
        machine:
          type: q35
        resources:
          requests:
            memory: 4096M
      volumes:
      - name: harddrive
        persistentVolumeClaim:
          claimName: winhd
      - name: iso
        containerDisk:
          image: adminturneddevops/winkube:latest
      - name: virtio-drivers
        containerDisk:
          image: kubevirt/virtio-container-disk
```
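In a KubeVirt VirtualMachine, each entry under domain.devices.disks is backed by the entry under volumes with the same name, so a typo in either list leaves a disk without storage. The small sketch below checks that pairing for the names used in the manifest above. The disksWithoutVolumes helper is hypothetical and only compares name lists; KubeVirt performs its own validation of the full object when you apply it.

```go
package main

import "fmt"

// disksWithoutVolumes returns the disk names that have no volume of the same name.
func disksWithoutVolumes(disks, volumes []string) []string {
	have := make(map[string]bool, len(volumes))
	for _, v := range volumes {
		have[v] = true
	}
	var missing []string
	for _, d := range disks {
		if !have[d] {
			missing = append(missing, d)
		}
	}
	return missing
}

func main() {
	// Names taken from the VirtualMachine manifest above.
	disks := []string{"iso", "harddrive", "virtio-drivers"}
	volumes := []string{"harddrive", "iso", "virtio-drivers"}

	if missing := disksWithoutVolumes(disks, volumes); len(missing) > 0 {
		fmt.Println("disks without a matching volume:", missing)
	} else {
		fmt.Println("every disk has a matching volume")
	}
}
```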

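After a few minutes, you should be able to connect to the VM over VNC. The kubectl virt command comes from KubeVirt’s virtctl tool, which you can install as a kubectl plugin with krew (kubectl krew install virt).

```shell
kubectl virt vnc windowsonkubernetes
```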

Crossplane

To tie the entire Platform Engineering capability together, there’s Crossplane. Crossplane gives you the ability to create resources outside of Kubernetes with Kubernetes. For example, you can create a Kubernetes Manifest that creates an S3 bucket in AWS or a Virtual Network (VNet) in Azure.

The same rules apply with Crossplane as they do with Cluster API. You can create your own Provider, but chances are you’ll be using a Provider that already exists.

Installing Crossplane

The first step is to install Crossplane. You can do so with Helm.

Add the Crossplane Helm repository and refresh your local chart index:

```shell
helm repo add crossplane-stable https://charts.crossplane.io/stable
helm repo update
```

Install Crossplane into the crossplane-system Namespace.

```shell
helm install crossplane \
  crossplane-stable/crossplane \
  --namespace crossplane-system \
  --create-namespace
```

Adding The Azure Provider

First, add the Upbound Azure Provider by applying the manifest below.

```yaml
apiVersion: pkg.crossplane.io/v1
kind: Provider
metadata:
  name: upbound-provider-azure
spec:
  package: xpkg.upbound.io/upbound/provider-azure:v0.29.0
```

Confirm that the Provider is installed. It can take a few minutes before the HEALTHY column shows True.

```shell
kubectl get providers
```

Run the following to create an Azure App Registration (service principal) that Crossplane will use to authenticate to Azure, saving the output to a file called azure.json. Replace your_sub_id with your subscription ID.

```shell
az ad sp create-for-rbac \
  --sdk-auth \
  --role Owner \
  --scopes /subscriptions/your_sub_id > azure.json
```
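Because the Provider reads its credentials from this file, it can help to sanity-check azure.json before storing it in a secret. The sketch below assumes the --sdk-auth output format, a JSON object that includes clientId, clientSecret, subscriptionId, and tenantId among other fields, and reports any of those four that are missing or empty. The missingCredentialFields helper is hypothetical and is not part of Crossplane or the Azure CLI.

```go
package main

import (
	"encoding/json"
	"fmt"
	"os"
)

// sdkAuth holds the --sdk-auth fields this check cares about; others are ignored.
type sdkAuth struct {
	ClientID       string `json:"clientId"`
	ClientSecret   string `json:"clientSecret"`
	SubscriptionID string `json:"subscriptionId"`
	TenantID       string `json:"tenantId"`
}

// missingCredentialFields parses the JSON and returns, in a fixed order,
// the names of required fields that are absent or empty.
func missingCredentialFields(data []byte) ([]string, error) {
	var a sdkAuth
	if err := json.Unmarshal(data, &a); err != nil {
		return nil, fmt.Errorf("azure.json is not valid JSON: %w", err)
	}
	fields := []struct{ name, value string }{
		{"clientId", a.ClientID},
		{"clientSecret", a.ClientSecret},
		{"subscriptionId", a.SubscriptionID},
		{"tenantId", a.TenantID},
	}
	var missing []string
	for _, f := range fields {
		if f.value == "" {
			missing = append(missing, f.name)
		}
	}
	return missing, nil
}

func main() {
	data, err := os.ReadFile("azure.json")
	if err != nil {
		fmt.Println("could not read azure.json:", err)
		return
	}
	missing, err := missingCredentialFields(data)
	switch {
	case err != nil:
		fmt.Println(err)
	case len(missing) > 0:
		fmt.Println("missing fields:", missing)
	default:
		fmt.Println("azure.json has the expected fields")
	}
}
```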

Create a secret with the Azure App Registration information for authentication and authorization purposes.

```shell
kubectl create secret \
  generic azure-secret \
  -n crossplane-system \
  --from-file=creds=./azure.json
```

Create the ProviderConfig below, which tells the Azure Provider to use the secret you just created to authenticate to Azure.

If you see an error about CRDs when applying it, wait another 3-5 minutes and try again. The Provider may not have finished installing the CRDs that this object from the Upbound Azure API depends on.

```yaml
apiVersion: azure.upbound.io/v1beta1
kind: ProviderConfig
metadata:
  name: default
spec:
  credentials:
    source: Secret
    secretRef:
      namespace: crossplane-system
      name: azure-secret
      key: creds
```

Last but not least, create the Virtual Network. Replace your_rg_name with the name of an existing resource group.

```yaml
apiVersion: network.azure.upbound.io/v1beta1
kind: VirtualNetwork
metadata:
  name: vnet
spec:
  forProvider:
    addressSpace:
      - 10.0.0.0/16
    location: "East US"
    resourceGroupName: your_rg_name
```