Gemma 3: Google's Open Multimodal Model with Long Context & Vision


Cover image from Google Blog.

Google DeepMind has officially introduced Gemma 3, the latest iteration of its open-source language model series. This release brings significant improvements, including multimodal capabilities, extended context lengths, and enhanced multilingual performance. With sizes ranging from 1 billion to 27 billion parameters, Gemma 3 is designed for efficient deployment on consumer-grade hardware while delivering state-of-the-art performance for its size. It also outperforms Llama3-405B, DeepSeek-V3 and o3-mini in human preference evaluations on the LMArena leaderboard.

Key Features of Gemma 3

1. Multimodal Capabilities

One of the biggest additions to Gemma 3 is vision understanding. Unlike previous versions, Gemma 3 can process images through a custom SigLIP vision encoder. This encoder converts each image into a fixed-size sequence of vectors that the language model interprets as soft tokens.

- Efficiency Boost: Instead of passing every image patch to the language model, Gemma 3 condenses the vision embeddings into 256 vectors.

- Flexible Resolution: Uses a Pan & Scan (P&S) method, inspired by LLaVA, to process high-resolution images and non-square aspect ratios efficiently.

- Applications: Ideal for image captioning, document understanding, and visual question answering.
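To make the "256 vectors" idea concrete, here is a minimal sketch of how a grid of patch embeddings could be condensed by average pooling. The 64x64 input grid, the 2-dimensional toy embeddings, and the function name `average_pool_grid` are assumptions for illustration only; the article does not describe the exact pooling used.

```python
def average_pool_grid(grid, out_side):
    """Average-pool a square grid of embedding vectors to out_side x out_side."""
    in_side = len(grid)
    if in_side % out_side != 0:
        raise ValueError("input side must be a multiple of the output side")
    k = in_side // out_side          # pooling window is k x k patches
    dim = len(grid[0][0])
    pooled = []
    for r in range(out_side):
        row = []
        for c in range(out_side):
            acc = [0.0] * dim
            for dr in range(k):
                for dc in range(k):
                    vec = grid[r * k + dr][c * k + dc]
                    for i in range(dim):
                        acc[i] += vec[i]
            row.append([a / (k * k) for a in acc])
        pooled.append(row)
    return pooled


# Toy patch grid (assumed 64x64 patches, 2-dim embeddings for readability).
patch_grid = [[[float(r), float(c)] for c in range(64)] for r in range(64)]

# Flatten the pooled 16x16 grid into a sequence of soft tokens.
soft_tokens = [vec for row in average_pool_grid(patch_grid, 16) for vec in row]
print(len(soft_tokens))  # number of soft tokens handed to the language model
```

Whatever the image content, the language model sees the same number of soft tokens per image, which keeps the cost of the vision input predictable.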

2. Long Context: Up to 128K Tokens

Gemma 3 significantly increases its context length compared to previous versions, supporting up to 128,000 tokens (except the 1B model, which supports 32K tokens). Handling such long contexts efficiently requires key architectural optimizations:

- Hybrid Attention Mechanism: Interleaves local and global attention layers at a 5:1 ratio, reducing memory usage while maintaining performance.

- Memory Optimization: Local layers only attend to a short span of recent tokens, which limits the KV-cache growth that usually makes long-context models memory-hungry.

- RoPE Scaling: Increases the RoPE (Rotary Position Embeddings) base frequency from 10K to 1M for global attention layers.
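The effect of the 5:1 layout on memory can be estimated with a little arithmetic. The sketch below is a rough illustration, not Gemma 3's actual configuration: the 48-layer depth, the 1,024-token local window, the head dimension of 128, and all function names are assumptions chosen for the example.

```python
def layer_pattern(num_layers, local_per_global=5):
    """Label each layer, placing one global layer after every 5 local ones."""
    return [
        "global" if (i + 1) % (local_per_global + 1) == 0 else "local"
        for i in range(num_layers)
    ]


def kv_cache_positions(num_layers, context_len, window, local_per_global=5):
    """Cached token positions summed over layers (proportional to KV memory)."""
    total = 0
    for kind in layer_pattern(num_layers, local_per_global):
        # Global layers cache the whole context; local layers only a window.
        total += context_len if kind == "global" else min(window, context_len)
    return total


def rope_inv_freqs(head_dim, base):
    """Standard RoPE inverse frequencies: base^(-2i/d) for each dim pair."""
    return [base ** (-2 * i / head_dim) for i in range(head_dim // 2)]


NUM_LAYERS = 48        # hypothetical depth, chosen to divide evenly by 6
CONTEXT = 128_000      # context length quoted in the article
WINDOW = 1_024         # assumed local attention window

pattern = layer_pattern(NUM_LAYERS)
hybrid = kv_cache_positions(NUM_LAYERS, CONTEXT, WINDOW)
all_global = NUM_LAYERS * CONTEXT
print(f"hybrid cache is {hybrid / all_global:.1%} of an all-global cache")

# A larger base lowers the slowest rotation frequency, so positions far
# apart remain distinguishable in the global layers.
slowest_10k = rope_inv_freqs(128, 10_000)[-1]
slowest_1m = rope_inv_freqs(128, 1_000_000)[-1]
print(slowest_1m < slowest_10k)
```

Under these assumed numbers the hybrid layout caches roughly 17% of what an all-global stack would, since only one layer in six stores the full 128K context.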

3. Architecture & Efficiency Improvements

- Grouped-Query Attention (GQA): Enhances inference speed while reducing memory footprint.

- QK-Norm for Stability: Replaces soft-capping with QK-Norm for more stable training.

- Quantization Aware Training (QAT): Provides int4, int8, and float8 quantized versions to optimize memory usage.
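As a rough illustration of what quantization-aware training simulates, the sketch below rounds one weight channel to signed 4-bit integers and maps it back to floats ("fake quantization"). In QAT the forward pass typically uses such rounded weights so the model learns to tolerate the rounding error. The symmetric per-channel scheme and the name `fake_quantize_int4` are assumptions; the article does not specify Gemma 3's exact recipe.

```python
def fake_quantize_int4(weights):
    """Quantize one channel to symmetric int4, then dequantize it again."""
    qmax = 7  # symmetric signed 4-bit range [-7, 7]
    max_abs = max(abs(w) for w in weights)
    if max_abs == 0.0:
        return [0] * len(weights), 1.0, [0.0] * len(weights)
    scale = max_abs / qmax                      # one scale per channel
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    dequantized = [q * scale for q in quantized]
    return quantized, scale, dequantized


channel = [0.7, -0.3, 0.12, 0.0]
q, scale, deq = fake_quantize_int4(channel)
print(q)    # integer codes that would be stored in 4 bits each
print(deq)  # values the forward pass would actually compute with
```

Each weight lands within half a quantization step of its original value, which is the error the training procedure is meant to absorb.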

4. Enhanced Multilingual Support

Gemma 3 enhances its multilingual capabilities by revisiting its training data mixture and adopting the Gemini 2.0 tokenizer:

- Expanded Vocabulary: Supports 262K token entries for better non-English language processing.

- Balanced Data Strategy: Uses an improved language distribution technique to avoid overfitting to English.

- Better Handling of Non-English Scripts: Falls back to byte-level encoding for characters that have no dedicated vocabulary entry, so no input becomes an unknown token.
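Byte-level encoding can be pictured with a toy tokenizer: known pieces map to vocabulary entries, and anything else is emitted as its UTF-8 bytes. The greedy longest-match strategy, the tiny vocabulary, and the `<0xNN>` token spelling below are illustrative assumptions, not the actual Gemma 3 tokenizer.

```python
def tokenize_with_byte_fallback(text, vocab):
    """Greedy longest-match tokenization with UTF-8 byte fallback."""
    tokens = []
    max_len = max(len(piece) for piece in vocab)
    i = 0
    while i < len(text):
        # Try the longest vocabulary piece that matches at position i.
        for length in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + length]
            if piece in vocab:
                tokens.append(piece)
                i += length
                break
        else:
            # No piece matched: emit one token per UTF-8 byte of the character.
            tokens.extend(f"<0x{b:02X}>" for b in text[i].encode("utf-8"))
            i += 1
    return tokens


toy_vocab = {"hello", " ", "wor", "ld"}   # hypothetical vocabulary
tokens = tokenize_with_byte_fallback("hello 世", toy_vocab)
print(tokens)
```

A larger vocabulary, such as the 262K entries mentioned above, means fewer characters fall through to the byte path, which shortens sequences for non-English text.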

5. Instruction-Tuned Models (IT) with SOTA Performance

The instruction-tuned (IT) models of Gemma 3 undergo an advanced post-training pipeline, incorporating knowledge distillation, reinforcement learning (RLHF), and dataset filtering.

- New Post-Training Approach: Leverages BOND, WARM, and WARP techniques for instruction tuning.

- Mathematics & Reasoning Boost: Outperforms prior models on math, code, and reasoning benchmarks.

- Reduced Hallucinations: Implements in-context attribution techniques to minimize factual errors.
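Knowledge distillation, the first ingredient listed above, trains a smaller student model to match a larger teacher's output distribution. The sketch below shows the generic soft-target cross-entropy for a single token position; it is a textbook formulation with made-up logits, and is not claimed to be the exact loss used for Gemma 3.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits):
    """Cross-entropy of the student against the teacher's soft targets."""
    teacher_probs = softmax(teacher_logits)
    student_probs = softmax(student_logits)
    return -sum(t * math.log(s) for t, s in zip(teacher_probs, student_probs))


teacher = [2.0, 0.5, -1.0]            # hypothetical teacher logits
loss_matching = distillation_loss([2.0, 0.5, -1.0], teacher)
loss_mismatch = distillation_loss([-1.0, 0.5, 2.0], teacher)
print(loss_matching < loss_mismatch)  # a matching student has the lower loss
```

Minimizing this loss pushes the student toward the teacher's full probability distribution rather than only its top prediction.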

Performance Benchmarks

Gemma 3 achieves impressive results across various AI benchmarks:

| Benchmark | Gemma 3 27B | Gemma 2 27B | Improvement (percentage points) |
|---|---|---|---|
| MMLU-Pro | 67.5% | 56.9% | ✅ +10.6 |
| LiveCodeBench | 29.7% | 20.4% | ✅ +9.3 |
| Bird-SQL (dev) | 54.4% | 46.7% | ✅ +7.7 |
| FACTS Grounding | 74.9% | 62.4% | ✅ +12.5 |

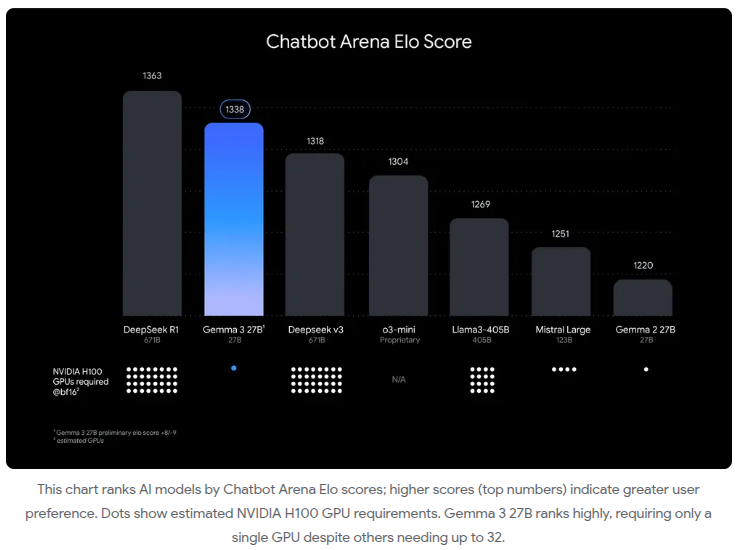

LMSYS Chatbot Arena Ranking

Gemma3-27B-IT ranked #9 globally in the LMSYS Chatbot Arena, achieving an Elo score of 1338. This puts it ahead of:

- DeepSeek-V3 (1318)

- LLaMA 3 70B (1257)

- Qwen2.5-72B (1257)
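To get a feel for what these rating gaps mean, the standard Elo formula converts a rating difference into an expected head-to-head preference rate. The sketch below assumes the classic logistic Elo model with a 400-point scale; the leaderboard's own scoring method may differ in detail, so treat the numbers as approximate.

```python
def expected_win_probability(rating_a, rating_b):
    """Classic Elo expected score of A against B (400-point logistic scale)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


GEMMA_3_27B_IT = 1338
rivals = {"DeepSeek-V3": 1318, "LLaMA 3 70B": 1257, "Qwen2.5-72B": 1257}

for name, rating in rivals.items():
    p = expected_win_probability(GEMMA_3_27B_IT, rating)
    print(f"Gemma 3 27B IT vs {name}: preferred in about {p:.0%} of votes")
```

Under this model, a 20-point lead corresponds to only a slight edge in pairwise votes, while an 81-point lead is a clearer preference.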

Why Gemma 3 is Important

Gemma 3 sets a new benchmark for open-source AI by combining multimodal capabilities, efficient long-context processing, and enhanced multilinguality. Unlike larger proprietary models, Gemma 3 can run efficiently on consumer hardware, making it an excellent choice for:

- Developers building chatbots, coding assistants, and research tools.

- Researchers looking for an open-source model with cutting-edge performance.

- AI Enthusiasts exploring multimodal interactions and instruction tuning.

In conclusion…

Gemma 3 is a major step toward open AI innovation. With its improved efficiency, multimodal reasoning, and long-context abilities, it's clear that Gemma 3 is one of the best open models available today (we'll see about tomorrow...).

Read the full research paper: